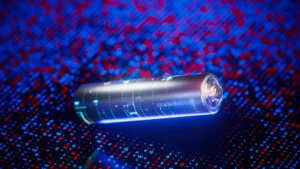

Engineers at Rice University have built a small radar sensor that could make self-driving cars significantly safer on real roads. The device, called EyeDAR, is roughly the size of an orange and draws little power, which makes it practical to deploy in places where space and energy are limited.

The idea behind it is simple. Self-driving vehicles already carry their own sensors, but those sensors have weak spots. Blind spots, busy intersections, and low-visibility conditions like fog or heavy rain can all reduce how well a car’s onboard system detects what’s around it.

EyeDAR is designed to fill those gaps by acting as an additional set of eyes placed outside the vehicle, feeding it better information about its surroundings.

It uses millimeter-wave radar, which works in conditions where cameras and LiDAR tend to struggle, making it a useful addition to the existing mix of technologies that autonomous vehicles rely on.

EyeDAR And The Existing Sensors

Most self-driving cars use a combination of cameras, LiDAR, and radar to build a picture of what’s around them.

Cameras and LiDAR do a decent job in good conditions by giving the vehicle detailed spatial data to work with. But when rain, fog, or darkness enters the picture, their performance drops significantly. That’s where radar steps in, since it handles poor weather and low light better than the other two.

The problem is that radar isn’t perfect either. Much of the signal it transmits scatters away instead of reflecting back to the receiver. As a result, stationary objects or pedestrians who aren’t directly in the radar’s path can go undetected until there’s very little time to react.

EyeDAR was built to address exactly that gap. Rather than replacing existing sensors, it works with them to cover the blind spots that cameras, LiDAR, and radar consistently miss.

How Does EyeDAR Work?

EyeDAR is the work of Kun Woo Cho, a researcher working under Ashutosh Sabharwal, a professor of electrical and computer engineering at Rice University. What makes it stand out is that it pairs a simple hardware design with efficient signal detection and needs no complex or expensive components.

That orange-sized footprint matters because it means EyeDAR can be mounted on existing road infrastructure, like lamp posts or traffic signals, without major installation work. Compact hardware keeps deployment costs down and makes it easier to cover more ground.

On the technical side, EyeDAR uses millimeter-wave radar, which keeps working in rain, fog, and darkness, the conditions that degrade cameras and LiDAR.

Inside the casing, a 3D-printed Luneburg lens sits at the center, surrounded by an antenna array. The lens naturally concentrates incoming radar signals onto the detection elements, which is what allows EyeDAR to pick up objects that standard radar setups tend to miss.
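
For readers who want to see why a lens can do the direction-finding work, here is a minimal sketch. It relies on two textbook properties of an ideal Luneburg lens: its refractive index falls from √2 at the center to 1 at the surface, and a plane wave arriving from one direction is focused onto the point on the opposite side of the sphere. The eight-element ring, the power readings, and the function names are hypothetical, chosen only for illustration; the article does not describe EyeDAR's actual antenna layout or detection logic.

```python
import math

def luneburg_index(r: float, radius: float) -> float:
    """Refractive index of an ideal Luneburg lens at distance r from its center."""
    if not 0 <= r <= radius:
        raise ValueError("r must lie inside the lens")
    return math.sqrt(2.0 - (r / radius) ** 2)

def estimate_arrival_angle(powers, element_angles_deg):
    """Estimate the arrival direction (degrees) from the strongest element.

    An ideal lens focuses a plane wave onto the point diametrically opposite
    its arrival direction, so the source lies 180 degrees away from the
    element that receives the most power. No phase math is needed.
    """
    strongest = max(range(len(powers)), key=lambda i: powers[i])
    return (element_angles_deg[strongest] + 180.0) % 360.0

# Hypothetical ring of 8 elements, one every 45 degrees (assumed layout).
angles = [i * 45.0 for i in range(8)]
# Hypothetical received power per element; element 3 (at 135 degrees) is brightest.
powers = [0.1, 0.2, 0.1, 0.9, 0.3, 0.1, 0.1, 0.1]

print(luneburg_index(0.0, 1.0))                 # index at the center
print(luneburg_index(1.0, 1.0))                 # index at the surface
print(estimate_arrival_angle(powers, angles))   # 315.0
```

In this simplified picture the lens itself sorts signals by direction, so locating a target reduces to finding the brightest element rather than running a heavier beamforming computation.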

Because EyeDAR handles most of the direction-finding calculations directly in the hardware, it processes target locations hundreds of times faster than conventional radar systems. That speed matters in real-world driving, where a fraction of a second can be the difference between a safe stop and a collision.
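
To put that in numbers, the distance a car covers while a sensor is still computing is simply speed multiplied by latency. The sketch below works through that arithmetic. The speed, both latency values, and the 200x speedup are illustrative assumptions, not measurements reported for EyeDAR.

```python
def distance_during_latency(speed_kmh: float, latency_s: float) -> float:
    """Metres travelled at constant speed while the sensor is still processing."""
    if speed_kmh < 0 or latency_s < 0:
        raise ValueError("speed and latency must be non-negative")
    speed_ms = speed_kmh / 3.6  # convert km/h to m/s
    return speed_ms * latency_s

# Assumed values, for illustration only.
SPEED_KMH = 50.0
SLOW_LATENCY_S = 0.100                  # hypothetical conventional processing time
FAST_LATENCY_S = SLOW_LATENCY_S / 200   # "hundreds of times faster", assumed 200x

for label, latency in [("slow", SLOW_LATENCY_S), ("fast", FAST_LATENCY_S)]:
    metres = distance_during_latency(SPEED_KMH, latency)
    print(f"{label}: {metres:.3f} m travelled before a target is located")
```

Under these assumptions the car moves roughly 1.4 m during the slower computation and under a centimetre during the faster one.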

One of its more practical features is how it feeds radar data back to the vehicle in a format self-driving systems can actually use. But the technology isn’t limited to cars. Robots, drones, and wearable devices can all benefit from the same hardware.

Its compact size and efficiency at the hardware level make it a strong candidate for wider adoption. For autonomous vehicles to operate safely on public roads, every sensor gap needs a reliable fix. EyeDAR looks like a serious answer to one of the more persistent ones.